
Imaging Systems, Microscopy, and Displays|77 Article(s)

Varifocal occlusion in an optical see-through near-eye display with a single phase-only liquid crystal on silicon

Woongseob Han, Jae-Won Lee, Jung-Yeop Shin, Myeong-Ho Choi, Hak-Rin Kim, and Jae-Hyeung Park

We propose a near-eye display optics system that supports three-dimensional mutual occlusion. By exploiting the polarization-control properties of a phase-only liquid crystal on silicon (LCoS), we achieve real see-through scene masking as well as virtual digital scene imaging using a single LCoS. Dynamic depth control of the real scene mask and virtual digital image is also achieved by using a focus tunable lens (FTL) pair of opposite curvatures. The proposed configuration using a single LCoS and opposite curvature FTL pair enables the self-alignment of the mask and image at an arbitrary depth without distorting the see-through view of the real scene. We verified the feasibility of the proposed optics using two optical benchtop setups: one with two off-the-shelf FTLs for continuous depth control, and the other with a single Pancharatnam–Berry phase-type FTL for the improved form factor.

Photonics Research

- Publication Date: Apr. 01, 2024

- Vol. 12, Issue 4, 833 (2024)

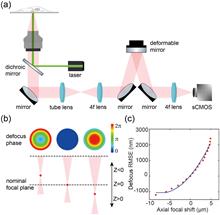

Aberration correction for deformable-mirror-based remote focusing enables high-accuracy whole-cell super-resolution imaging

Wei Shi, Yingchuan He, Jianlin Wang, Lulu Zhou, Jianwei Chen, Liwei Zhou, Zeyu Xi, Zhen Wang, Ke Fang, and Yiming Li

Single-molecule localization microscopy (SMLM) enables three-dimensional (3D) investigation of nanoscale structures in biological samples, offering unique insights into their organization. However, traditional 3D super-resolution microscopy using high numerical aperture (NA) objectives is limited by imaging depth of field (DOF), restricting their practical application to relatively thin biological samples. Here, we developed a unified solution for thick sample super-resolution imaging using a deformable mirror (DM) which served for fast remote focusing, optimized point spread function (PSF) engineering, and accurate aberration correction. By effectively correcting the system aberrations introduced during remote focusing and sample aberrations at different imaging depths, we achieved high-accuracy, large DOF imaging (∼8 μm) of the whole-cell organelles [i.e., nuclear pore complex (NPC), microtubules, and mitochondria] with a nearly uniform resolution of approximately 35 nm across the entire cellular volume.

Photonics Research

- Publication Date: Apr. 01, 2024

- Vol. 12, Issue 4, 821 (2024)

Deep correlated speckles: suppressing correlation fluctuation and optical diffraction

Xiaoyu Nie, Haotian Song, Wenhan Ren, Zhedong Zhang, Tao Peng, and Marlan O. Scully

The generation of speckle patterns via random matrices, statistical definitions, or apertures may not always result in optimal outcomes. Issues such as correlation fluctuations in low ensemble numbers and diffraction in long-distance propagation can arise. Instead of improving results of specific applications, our solution is catching deep correlations of patterns with the framework, Speckle-Net, which is fundamental and universally applicable to various systems. We demonstrate this in computational ghost imaging (CGI) and structured illumination microscopy (SIM). In CGI with extremely low ensemble number, it customizes correlation width and minimizes correlation fluctuations in illuminating patterns to achieve higher-quality images. It also creates non-Rayleigh nondiffracting speckle patterns only through a phase mask modulation, which overcomes the power loss in the traditional ring-aperture method. Our approach provides new insights into the nontrivial speckle patterns and has great potential for a variety of applications including dynamic SIM, X-ray and photo-acoustic imaging, and disorder physics.

Photonics Research

- Publication Date: Apr. 01, 2024

- Vol. 12, Issue 4, 804 (2024)

Faster structured illumination microscopy using complementary encoding-based compressive imaging

Zhengqi Huang, Yunhua Yao, Yilin He, Yu He, Chengzhi Jin, Mengdi Guo, Dalong Qi, Lianzhong Deng, Zhenrong Sun, Zhiyong Wang, and Shian Zhang

Structured illumination microscopy (SIM) has been widely applied to investigate intricate biological dynamics due to its outstanding super-resolution imaging speed. Incorporating compressive sensing into SIM brings the possibility to further improve the super-resolution imaging speed. Nevertheless, the recovery of the super-resolution information from the compressed measurement remains challenging in experiments. Here, we report structured illumination microscopy with complementary encoding-based compressive imaging (CECI-SIM) to realize faster super-resolution imaging. Compared to the nine measurements to obtain a super-resolution image in a conventional SIM, CECI-SIM can achieve a super-resolution image by three measurements; therefore, a threefold improvement in the imaging speed can be achieved. This faster imaging ability in CECI-SIM is experimentally verified by observing tubulin and actin in mouse embryonic fibroblast cells. This work provides a feasible solution for high-speed super-resolution imaging, which would bring significant applications in biomedical research.
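The threefold figure in this abstract follows directly from the raw-frame counts (nine versus three). A minimal sketch of that arithmetic is shown below, assuming acquisition is the only bottleneck and reconstruction time is ignored. The camera frame rate and the function name `sr_frame_rate` are hypothetical and do not come from the paper.

```python
def sr_frame_rate(camera_fps: float, raw_frames_per_image: int) -> float:
    """Super-resolution images per second when acquisition is the only bottleneck."""
    if raw_frames_per_image <= 0:
        raise ValueError("raw_frames_per_image must be positive")
    return camera_fps / raw_frames_per_image


# Hypothetical camera frame rate, chosen only for illustration.
camera_fps = 90.0

# Raw measurements per super-resolution image, as stated in the abstract.
conventional = sr_frame_rate(camera_fps, 9)  # conventional SIM
ceci = sr_frame_rate(camera_fps, 3)          # CECI-SIM

# The ratio is independent of the assumed camera frame rate.
speedup = ceci / conventional
print(f"conventional SIM: {conventional} images/s")
print(f"CECI-SIM: {ceci} images/s")
print(f"speedup: {speedup}x")
```

The speedup depends only on the ratio of measurement counts, so any camera frame rate gives the same factor.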

Photonics Research

- Publication Date: Mar. 25, 2024

- Vol. 12, Issue 4, 740 (2024)

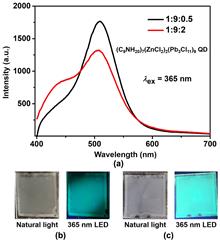

Lensless opto-electronic neural network with quantum dot nonlinear activation

Wanxin Shi, Xi Jiang, Zheng Huang, Xue Li, Yuyang Han, Sigang Yang, Haizheng Zhong, and Hongwei Chen

With the swift advancement of neural networks and their expanding applications in many fields, optical neural networks have gradually become a feasible alternative to electrical neural networks due to their parallelism, high speed, low latency, and power consumption. Nonetheless, optical nonlinearity is hard to realize in free-space optics, which restricts the potential of the architecture. To harness the benefits of optical parallelism while ensuring compatibility with natural light scenes, it becomes essential to implement two-dimensional spatial nonlinearity within an incoherent light environment. Here, we demonstrate a lensless opto-electrical neural network that incorporates optical nonlinearity, capable of performing convolution calculations and achieving nonlinear activation via a quantum dot film, all without an external power supply. Through simulation and experiments, the proposed nonlinear system can enhance the accuracy of image classification tasks, yielding a maximum improvement of 5.88% over linear models. The scheme shows a facile implementation of passive incoherent two-dimensional nonlinearities, paving the way for the applications of multilayer incoherent optical neural networks in the future.

Photonics Research

- Publication Date: Mar. 21, 2024

- Vol. 12, Issue 4, 682 (2024)

Full-polarization angular spectrum modeling of scattered light modulation

Rongjun Shao, Chunxu Ding, Yuan Qu, Linxian Liu, Qiaozhi He, Yuejun Wu, and Jiamiao Yang

The exact physical modeling for scattered light modulation is critical in phototherapy, biomedical imaging, and free-space optical communications. In particular, the angular spectrum modeling of scattered light has attracted considerable attention, but the existing angular spectrum models neglect the polarization of photons, degrading their performance. Here, we propose a full-polarization angular spectrum model (fpASM) to take the polarization into account. This model involves a combination of the optical field changes and free-space angular spectrum diffraction, and enables an investigation of the influence of polarization-related factors on the performance of scattered light modulation. By establishing the relationship between various model parameters and macroscopic scattering properties, our model can effectively characterize various depolarization conditions. As a demonstration, we apply the model in the time-reversal data transmission and anti-scattering light focusing. Our method allows the analysis of various depolarization scattering events and benefits applications related to scattered light modulation.

Photonics Research

- Publication Date: Mar. 01, 2024

- Vol. 12, Issue 3, 485 (2024)

Deep learning-based optical aberration estimation enables offline digital adaptive optics and super-resolution imaging (On the Cover)

Chang Qiao, Haoyu Chen, Run Wang, Tao Jiang, Yuwang Wang, and Dong Li

Optical aberrations degrade the performance of fluorescence microscopy. Conventional adaptive optics (AO) leverages specific devices, such as the Shack–Hartmann wavefront sensor and deformable mirror, to measure and correct optical aberrations. However, conventional AO requires either additional hardware or a more complicated imaging procedure, resulting in higher cost or a lower acquisition speed. In this study, we proposed a novel space-frequency encoding network (SFE-Net) that can directly estimate the aberrated point spread functions (PSFs) from biological images, enabling fast optical aberration estimation with high accuracy without engaging extra optics and image acquisition. We showed that with the estimated PSFs, the optical aberration can be computationally removed by the deconvolution algorithm. Furthermore, to fully exploit the benefits of SFE-Net, we incorporated the estimated PSF with neural network architecture design to devise an aberration-aware deep-learning super-resolution model, dubbed SFT-DFCAN. We demonstrated that the combination of SFE-Net and SFT-DFCAN enables instant digital AO and optical aberration-aware super-resolution reconstruction for live-cell imaging.

Photonics Research

- Publication Date: Mar. 01, 2024

- Vol. 12, Issue 3, 474 (2024)

Simultaneous dual-region two-photon imaging of biological dynamics spanning over 9 mm in vivo

Chi Liu, Cheng Jin, Junhao Deng, Junhao Liang, Licheng Zhang, and Lingjie Kong

Biodynamical processes, especially in system biology, that occur far apart in space may be highly correlated. To study such biodynamics, simultaneous imaging over a large span at high spatio-temporal resolutions is highly desired. For example, large-scale recording of neural network activities over various brain regions is indispensable in neuroscience. However, limited by the field-of-view (FoV) of conventional microscopes, simultaneous recording of laterally distant regions at high spatio-temporal resolutions is highly challenging. Here, we propose to extend the distance of simultaneous recording regions with a custom micro-mirror unit, taking advantage of the long working distance of the objective and spatio-temporal multiplexing. We demonstrate simultaneous dual-region two-photon imaging, spanning as large as 9 mm, which is 4 times larger than the nominal FoV of the objective. We verify the system performance in in vivo imaging of neural activities and vascular dilations, simultaneously, at two regions in mouse brains as well as in spinal cords, respectively. The adoption of our proposed scheme will promote the study of systematic biology, such as system neuroscience and system immunology.

Photonics Research

- Publication Date: Feb. 26, 2024

- Vol. 12, Issue 3, 456 (2024)

Deep optics preconditioner for modulation-free pyramid wavefront sensing

Felipe Guzmán, Jorge Tapia, Camilo Weinberger, Nicolás Hernández, Jorge Bacca, Benoit Neichel, and Esteban Vera

The pyramid wavefront sensor (PWFS) can provide the sensitivity needed for demanding adaptive optics applications, such as imaging exoplanets using the future extremely large telescopes of over 30 m of diameter (D). However, its exquisite sensitivity has a limited linear range of operation, or dynamic range, although it can be extended through the use of beam modulation—despite sacrificing sensitivity and requiring additional optical hardware. Inspired by artificial intelligence techniques, this work proposes to train an optical layer—comprising a passive diffractive element placed at a conjugated Fourier plane of the pyramid prism—to boost the linear response of the pyramid sensor without the need for cumbersome modulation. We develop an end-2-end simulation to train the diffractive element, which acts as an optical preconditioner to the traditional least-square modal phase estimation process. Simulation results with a large range of turbulence conditions show a noticeable improvement in the aberration estimation performance equivalent to over 3λ/D of modulation when using the optically preconditioned deep PWFS (DPWFS). Experimental results validate the advantages of using the designed optical layer, where the DPWFS can pair the performance of a traditional PWFS with 2λ/D of modulation. Designing and adding an optical preconditioner to the PWFS is just the tip of the iceberg, since the proposed deep optics methodology can be used for the design of a completely new generation of wavefront sensors that can better fit the demands of sophisticated adaptive optics applications such as ground-to-space and underwater optical communications and imaging through scattering media.
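The modulation amounts in this abstract are quoted in units of λ/D, the diffraction-limited angular scale of a telescope of diameter D. As a rough illustration of what 2λ/D and 3λ/D mean on the sky, the sketch below converts them to milliarcseconds. The 30 m diameter is taken from the abstract; the 1.65 μm wavelength and the function name `lambda_over_d_mas` are assumptions made only for this example and are not stated in the source.

```python
import math


def lambda_over_d_mas(wavelength_m: float, diameter_m: float) -> float:
    """Return the angular scale lambda/D in milliarcseconds."""
    if wavelength_m <= 0 or diameter_m <= 0:
        raise ValueError("wavelength and diameter must be positive")
    radians = wavelength_m / diameter_m
    # radians -> degrees -> arcseconds -> milliarcseconds
    return math.degrees(radians) * 3600.0 * 1000.0


wavelength_m = 1.65e-6  # assumed sensing wavelength, not given in the abstract
diameter_m = 30.0       # telescope diameter scale mentioned in the abstract

unit_mas = lambda_over_d_mas(wavelength_m, diameter_m)
for n in (2, 3):
    print(f"{n} lambda/D = {n * unit_mas:.2f} mas")
```

A different sensing wavelength rescales both values linearly, so the numbers printed here are indicative only.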

Photonics Research

- Publication Date: Feb. 01, 2024

- Vol. 12, Issue 2, 301 (2024)

Reflective ultrathin light-sheet microscopy with isotropic 3D resolutions (Spotlight on Optics)

Yue Wang, Dashan Dong, Wenkai Yang, Renxi He, Ming Lei, and Kebin Shi

Light-sheet fluorescence microscopy (LSFM) has played an important role in bio-imaging due to its advantages of high photon efficiency, fast speed, and long-term imaging capabilities. The perpendicular layout between LSFM excitation and detection often limits the 3D resolutions as well as their isotropy. Here, we report on a reflective type light-sheet microscope with a mini-prism used as an optical path reflector. The conventional high NA objectives can be used both in excitation and detection with this design. Isotropic resolutions in 3D down to 300 nm could be achieved without deconvolution. The proposed method also enables easy transform of a conventional fluorescence microscope to high performance light-sheet microscopy.

Photonics Research

- Publication Date: Feb. 01, 2024

- Vol. 12, Issue 2, 271 (2024)
